Google search results offer a goldmine for developers, SEO practitioners, and data scientists. Unfortunately, manually scraping these search results can be cumbersome. We'll learn how to use Python to collect data from Google efficiently. Whether working on search engine optimization, training AI models, or analyzing data patterns, this step-by-step guide will help you.

Not using Python? Read our introduction post on "How to Scrape Google Search Results"

How to scrape Google search results using Python?

There are at least three ways to do this:

- Custom Search JSON API by Google

- Create your DIY scraper solution

- Using SerpApi (Recommended)

1. Using Custom Search JSON API by Google.

You can use the "Google Custom Search JSON API". First, you must set up a Custom Search Engine (CSE) and get an API key from the Google Cloud Console. Once you have both, you can make HTTP requests to the API using Python's requests library or using the Google API client library for Python. By passing your search query and API key as parameters, you'll receive search results in JSON format, which you can then process as needed. Remember, the API isn't free and has usage limits, so monitor your queries to avoid unexpected costs.

- Create your DIY scraper solution

If you're looking for a DIY solution to get Google search results in Python without relying on Google's official API, you can use web scraping tools like BeautifulSoup and requests. Here's a simple approach:

2.1. Use the requests library to fetch the HTML content of a Google search results page.

2.2. Parse the HTML using BeautifulSoup to extract data from the search results.

You might face issues like IP bans or other scraping problems. Also, Google's structure might change, causing your scraper to break. The point is that building your own Google scraper will come with many challenges.

- Using SerpApi to make it all easy

SerpApi provides a more structured and reliable way to obtain Google search results without directly scraping Google. SerpApi essentially serves as a middleman, handling the complexities of scraping and providing structured JSON results. So you can save time and energy to collect data from Google without building your own Google Scraper or using other web scraping tools.

This blog post covers exactly how to scrape the Google search results in Python using SerpApi.

Scrape Google search results with Python Video Tutorial

If you prefer to watch a video tutorial, here is our YouTube video on easily scraping the Google SERP with a simple API.

Step-by-step scraping Google search results with Python

Without further ado, let's start and collect data from Google SERP.

Step 1: Tools we're going to use

We'll use the new official Python library by SerpApi: serpapi-python .

That's the only tool that we need!

As a side note: You can use this library to scrape search results from other search engines, not just Google.

Normally, you'll write your DIY solution using something like BeautifulSoup, Selenium, Scrapy, Requests, etc., to scrape Google search results. You can relax now since we perform all these heavy tasks for you. So, you don't need to worry about all the problems you might've encountered while implementing your web scraping solution.

Step 2: Setup and preparation

- Sign up for free at SerpApi. You can get 250 free searches per month.

- Get your SerpApi Api Key from this page.

- Create a new

.envfile, and assign a new env variable with value from API_KEY above.SERPAPI_KEY=$YOUR_SERPAPI_KEY_HERE - Install python-dotenv to read the

.envfile withpip install python-dotenv - Install SerpApi's Python library with

pip install serpapi - Create new

main.pyfile for the main program.

Your folder structure will look like this:

|_ .env

|_ main.py

Step 3: Write the code for scraping basic Google search result

Let's say we want to find Google search results for a keyword coffee from location Austin, Texas.

import os

import serpapi

from dotenv import load_dotenv

load_dotenv()

api_key = os.getenv('SERPAPI_KEY')

client = serpapi.Client(api_key=api_key)

result = client.search(

q="coffee",

engine="google",

location="Austin, Texas",

hl="en",

gl="us",

)

print(result)

Basic Google search result scrape with Python

Try to run this program with python main.py or python3 main.py from your terminal.

Feel free to change the value of theq parameterwith any keyword you want to search for.

The organic Google search results are available at result['organic_results']

Get other results

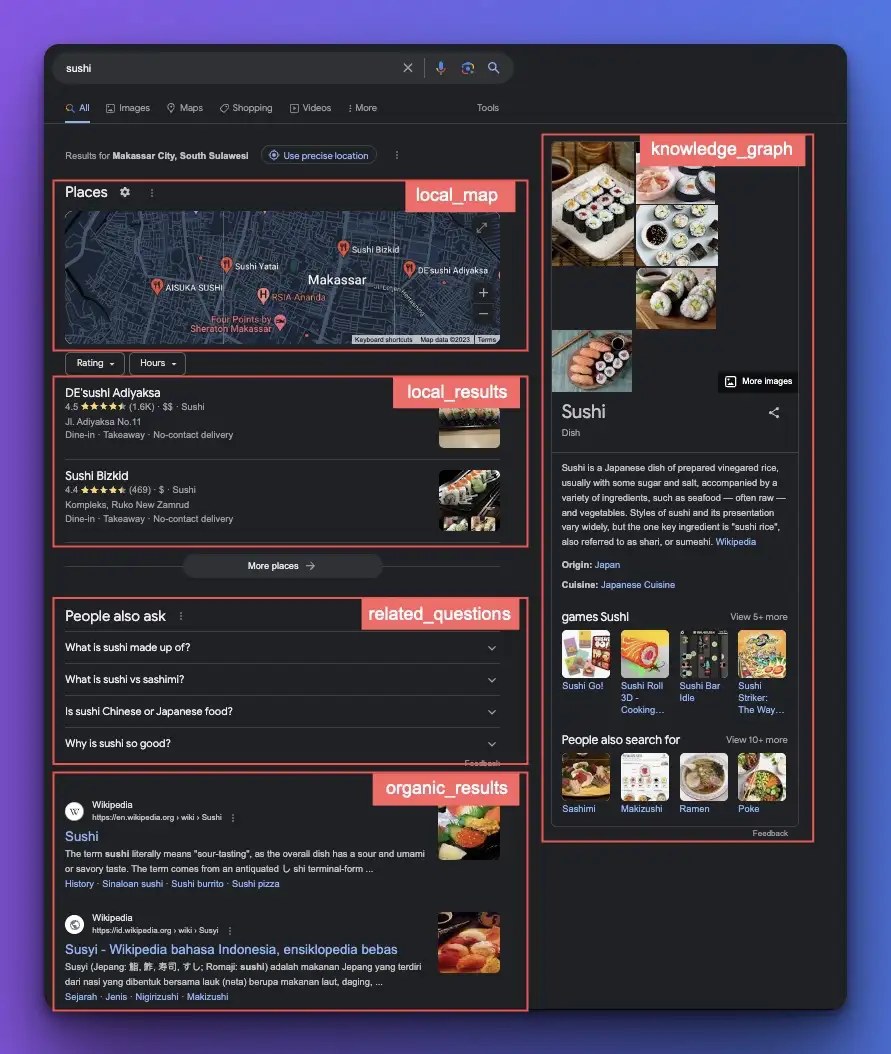

We can get all the information in Google results, not just the organic results list. For example, we can get the number of results, menu items, local results, related questions, knowledge graph, etc.

All this data is available in the result we had earlier. For example:

print(result) # All information

print(result["search_information"]["total_results"]) # Get number of results available

print(result["related_questions"]) # Get all the related questions

Paginate Google search results

Based on Google Search Engine Results API documentation, we can get the second, third page, and so on, using the start and num parameter.

Start

This parameter defines the result offset. It skips the given number of results. It's used for pagination. (e.g.,0(default) is the first page of results,10is the 2nd page of results,20is the 3rd page of results, etc.).

Num [Update September 2025: Num is not supported anymore]

This parameter defines the maximum number of results to return. (e.g.,10(default) returns 10 results,40returns 40 results, and100returns 100 results).

The default amount of results is 10. It means:

- We can get the second page by adding the

start=10in the search parameter. - Third page with

start=20 - and so on.

Code sample to get the second page, where we get ten results for each page.

result = client.search(

...

start=20

)

print(result)

Export Google Scrape results to CSV

What if you need the data in csv format ? You can add the code below. This code sample shows you how to store all the organic results in the CSV file. We're saving the title, link, and snippet.

...

import csv

result = client.search(...)

organic_results = result["organic_results"]

with open('output.csv', 'w', newline='') as csvfile:

csv_writer = csv.writer(csvfile)

# Write the headers

csv_writer.writerow(["Title", "Link", "Snippet"])

# Write the data

for result in organic_results:

csv_writer.writerow([result["title"], result["link"], result["snippet"]])

print('Done writing to CSV file.')

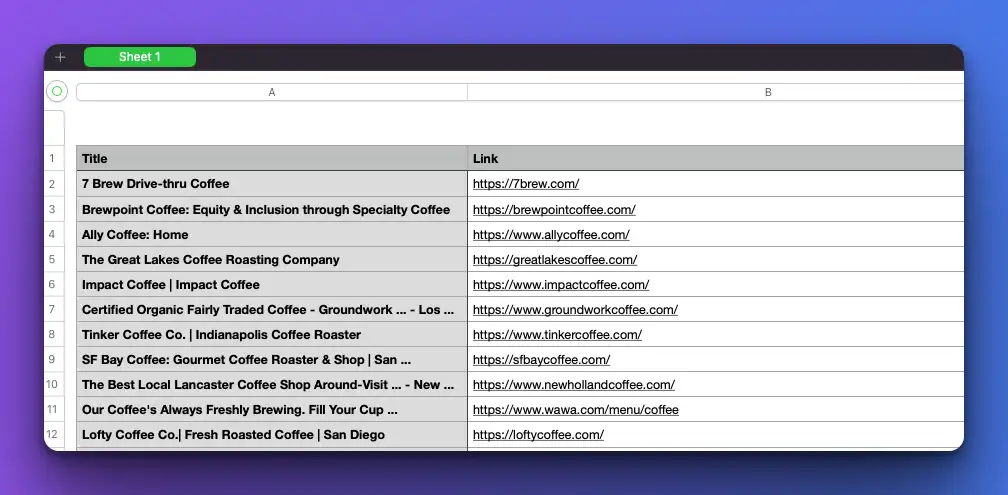

export Google search scrape results to a CSV file

Now, you can view this data nicely by opening the output.csv file with Microsoft Excel or the Numbers application.

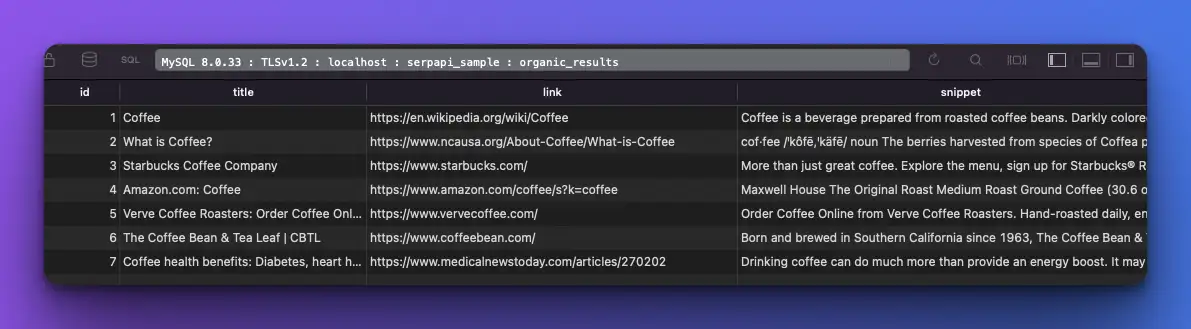

Export Google Scrape results to a Database

Now, let's learn how to save all this data in a database. We'll use mysql as an example here, but feel free to use another database engine as well. Like the previous sample, we'll store the title, link, and snippet from the organic results.

First, install the mysql-connector-python with

pip install mysql-connector-pythoninstall MySQL connector python

Then, you can insert the JSON results into the connected database

...

import mysql.connector

result = client.search(...)

organic_results = result["organic_results"]

# Database connection configuration

db_config = {

"host": "localhost",

"user": "root",

"password": "",

"database": "database_name"

}

# Establish a database connection

connection = mysql.connector.connect(**db_config)

cursor = connection.cursor()

# Create a table for the data (if it doesn't exist)

create_table_query = """

CREATE TABLE IF NOT EXISTS organic_results (

id INT AUTO_INCREMENT PRIMARY KEY,

title VARCHAR(255) NOT NULL,

link TEXT NOT NULL,

snippet TEXT NOT NULL

);

"""

cursor.execute(create_table_query)

# Insert the JSON data into the database

for result in organic_results:

insert_query = "INSERT INTO organic_results (title, link, snippet) VALUES (%s, %s, %s)"

cursor.execute(insert_query, (result["title"], result["link"], result["snippet"]))

# Commit the transaction and close the connection

connection.commit()

cursor.close()

connection.close()

export Google search scrape results to a database

Faster Google search scraping with threading

What if we need to perform multiple queries? First, we'll see how to do it with a normal loop and how long it takes.

We're using time package to compare the speed between this program and the one using thread later.

....

import time

start_time = time.time()

keywords = ["Bill Gates", "Steve Jobs", "Steve Wozniak", "Linus Torvalds", "Tim Berners Lee"]

def fetch_results():

all_results = []

for keyword in keywords:

print("Making request for: " + keyword)

result = client.search(

q=keyword,

engine="google",

location="Austin, Texas",

hl="en",

gl="us"

)

all_results.extend(result.get("organic_results", []))

return all_results

results = fetch_results()

print(results)

print("--- Finished in %s seconds ---" % (time.time() - start_time))

Perform multiple queries in SerpApi

It took around 12 seconds to finish.

Let's make this faster!

We'll need to use the threading module from Python's standard library to run the program concurrently. The basic idea is to run each API call in a separate thread so multiple calls can run concurrently.

Here's how we can adapt your program to use multithreading

...

import time

import threading

start_time = time.time()

keywords = ["Bill Gates", "Steve Jobs", "Steve Wozniak", "Linus Torvalds", "Tim Berners Lee"]

all_results = []

def fetch_results(keyword):

print("Making request for: " + keyword)

result = client.search(

q=keyword,

engine="google",

location="Austin, Texas",

hl="en",

gl="us",

no_cache=True

)

global all_results

all_results.extend(result.get("organic_results", []))

# Create and start a thread for each keyword

threads = []

for keyword in keywords:

thread = threading.Thread(target=fetch_results, args=(keyword,))

thread.start()

threads.append(thread)

# Wait for all threads to finish

for thread in threads:

thread.join()

print(all_results)

print("--- Finished in %s seconds ---" % (time.time() - start_time))

Run multiple queries with thread in python

It took around 8 seconds. I expect the time gap will increase as the number of queries we need to perform grows.

Scraping Google Search Results with Python and BeautifulSoup

We will also show the manual way to use Python and BeautifulSoup to get data from Google search. But remember, this way isn't always perfect. Scraping can have problems, and Google's HTML structure can change, which means it can change over time, potentially breaking our scraper.

pip install beautifulsoup4

pip install requestsCreating our basic scraper

import requests

from bs4 import BeautifulSoup

headers = {

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.3'

}

def get_search_results(query):

url = 'https://www.google.com/search?gl=us&q=' + query

response = requests.get(url, headers=headers)

soup = BeautifulSoup(response.text, 'html.parser')

print(soup)

query = "coffee"

search_results = get_search_results(query)- We're using a user-agent to help perform the request.

- We hit an HTTP request to the Google search endpoint.

- We use BeautifulSoup to parse the HTML response.

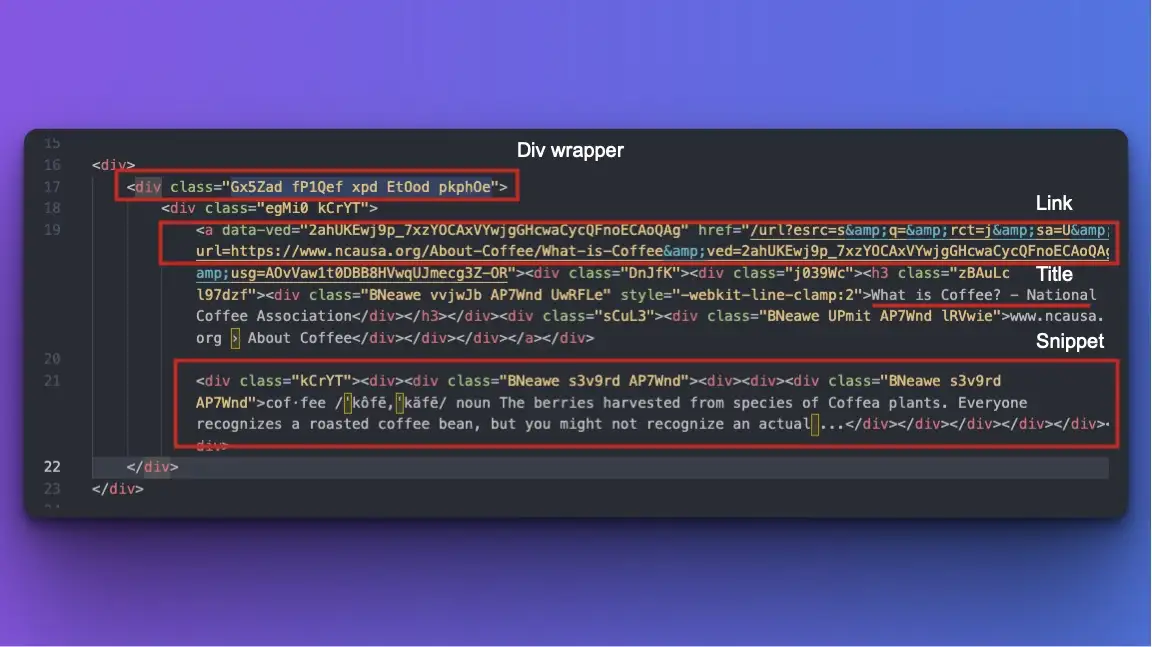

The results will show a raw HTML from Google SERP. Now, we should identify where the organic results are located. I'm doing this by copy-pasting the output to a text editor and trying to find a pattern.

We'll use this CSS class and HTML tags information to get the relevant information.

def get_search_results(query):

url = 'https://www.google.com/search?gl=us&q=' + query

response = requests.get(url, headers=headers)

soup = BeautifulSoup(response.text, 'html.parser')

# Find all the search result this div wrapper

result_divs = soup.find_all('div', class_='Gx5Zad fP1Qef xpd EtOod pkphOe')

results = []

for div in result_divs:

# Only get the organic_results

if div.find('div', class_='egMi0 kCrYT') is None:

continue

# Extracting the title (linked text) from h3

title = div.find('h3').text

# Extracting the URL

link = div.find('a')['href']

# Extracting the brief description

description = div.find('div', class_='BNeawe s3v9rd AP7Wnd').text

results.append({'title': title, 'link': link, 'description': description})

return results

query = "coffee"

search_results = get_search_results(query)

for result in search_results:

print(result['title'] + "\n" + result['link'] + "\n" + result['description'])

print("-------------")

That's the basic version of getting Google SERP data with Python and BeautifulSoup.

That's how you can scrape Google Search results using Python.

Python scraping series

- If you're interested in scraping Google Maps, feel free to read How to scrape Google Maps places data and it's reviews using Python.

- If you want to collect data from Google Trends, please read our Google Trends API using Python post.

Frequently asked question

Why use Python for web scraping?

Python stands out as a preferred choice for web scraping for several reasons. The vast array of libraries, such as BeautifulSoup, requests, and Scrapy, are specifically designed for web scraping tasks, streamlining the process and reducing the amount of code required. It also allows easy integration with databases and data analysis tools, making the entire data collection and analysis workflow seamless. Furthermore, Python's asynchronous capabilities can enhance the speed and efficiency of large-scale scraping tasks. All these factors combined make Python a top contender in web scraping.

Is it legal to scrape Google search results?

Web scraping's legality varies by country and the specific website's terms of service. While some websites allow scraping, others prohibit it. With that being said, you don't need to worry about the legality if you're using SerpApi to collect these search results.

"SerpApi, LLC promotes ethical scraping practices by enforcing compliance with the terms of service of search engines and websites. By handling scraping operations responsibly and abiding by the rules, SerpApi helps users avoid legal repercussions and fosters a sustainable web scraping ecosystem." - source: Safeguarding Web Scraping Activities with SerpApi.

Why scrape Google search results?

There are numerous advantages to scraping data from Google SERPs. Here are five key benefits:

- Find Data Faster: Quickly get information without manual searches.

- Track Trends: See what's popular or changing over time.

- Monitor Competitors: Know what others in your field are doing.

- Boost Marketing: Understand keywords and optimize your online presence.

- Research: Gather data for studies or business insights.

How to scrape Google Search Results without getting blocked?

- Use Proxies: Rotate multiple IP addresses to prevent your main IP from being blocked. This makes it harder for Google to pinpoint scraping activity from a single source.

- Set Delays: Don't send requests too rapidly. Wait for a few seconds or more between requests to mimic human behavior and avoid triggering rate limits.

- Change User Agents: Rotate user agents for every request. This makes it seem like the requests come from different devices and browsers.

- Use CAPTCHA Solving Services: Sometimes, if Google detects unusual activity, it will prompt with a CAPTCHA. Services exist that can automatically solve these for you.

While these methods can help when scraping manually, you don't have to worry about rotating proxies, setting delays, changing user agents, or solving CAPTCHAs when using SerpApi. It makes getting search results easier and faster since we will solve all those problems for you.